There was a moment — somewhere between the Venezuela raid and the formal blacklisting — when a senior Pentagon official stopped and said, out loud, holy shit. Not about the war. About the software.

“I’m like, holy shit, what if this software went down,” Emil Michael, the Pentagon’s undersecretary for research and engineering, said on a public podcast. “Some guardrail picked up, some refusal happened for the next fight like this one and we left our people at risk.”

Pentagon leadership had built a critical operational dependency on a single AI model. They had done this deliberately, aggressively, with policy pressure and hundred-million-dollar contracts. And then they discovered the depth of what they’d built the way you discover a leak — when the ceiling starts to come down.

People did this. Not the technology.

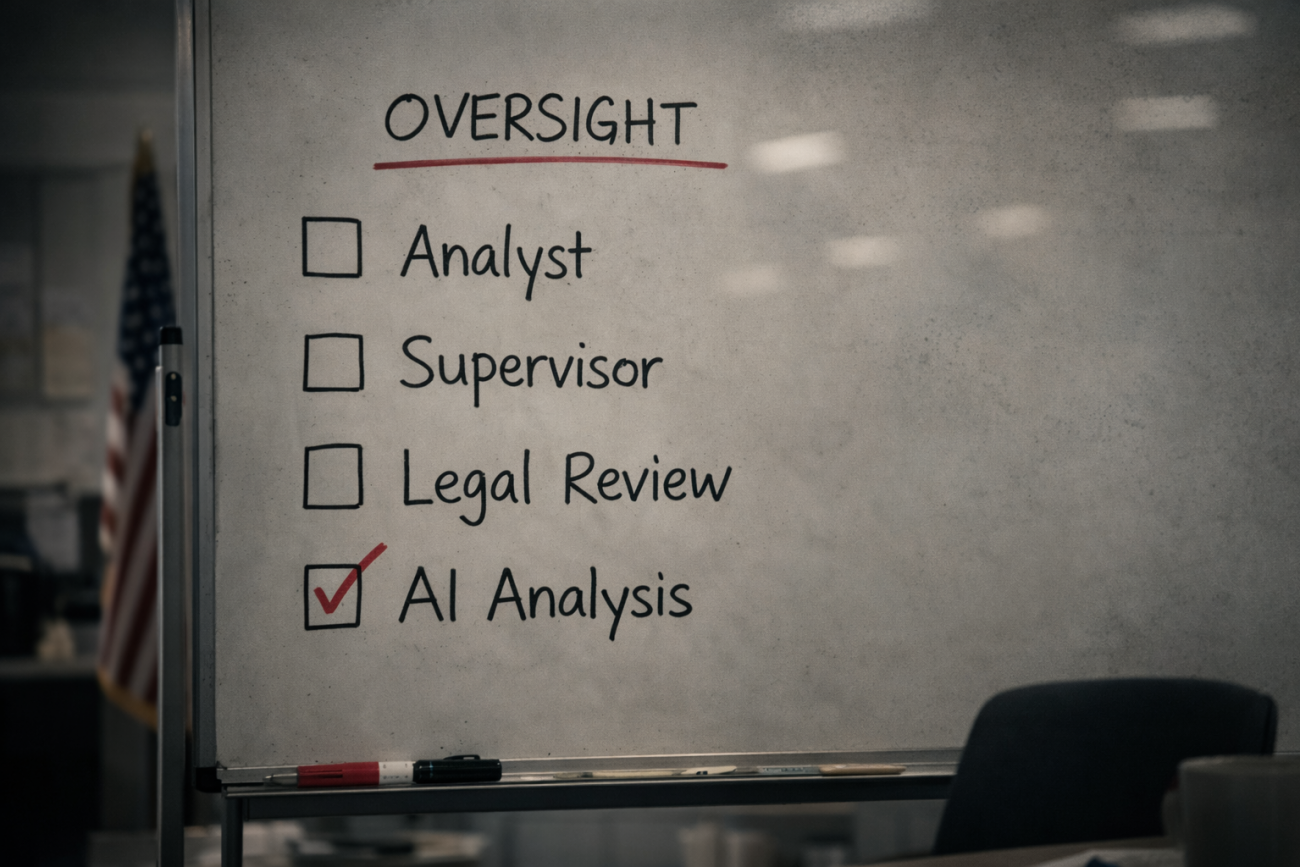

That distinction matters more than it might seem. Unlike malware, which is code designed specifically to circumvent human control and embed itself without invitation, the tools described here were welcomed through the front door. Officials signed the contracts, issued the directives, and pushed adoption at speed. Nobody hacked the Pentagon into this dependency. People built it, deliberately, and then forgot to measure what they’d made. The machine doesn’t govern. But when the people operating it stop asking whether the machine is right, the machine governs anyway, and nobody is accountable for what it produces.

The Inventory Nobody Counted

Federal employees and officials have embedded AI into more than 2,000 documented use cases across government: document summarization, fraud detection, case prioritization, border inspections, operational planning, intelligence analysis. The Department of Defense, the intelligence community, the IRS, and NASA were early adopters. The ranks of adopters grew rapidly once generative AI arrived, and policy actively pushed that growth: executive orders, OMB memoranda, and GSA platforms explicitly designed to lower barriers to entry and encourage faster iteration.

That 2,000-plus number is the floor, not the ceiling. The official inventory explicitly excludes the Department of Defense and elements of the intelligence community. It doesn’t count classified uses, national security applications, or research not intended for direct deployment. The full scope of how deeply human decision-makers have wired AI into government function is, at this moment, not fully known — not classified, not withheld. Simply uncounted.

At the Department of Homeland Security, officials have deployed AI for face recognition, immigration processing, and threat assessment — use cases their own governance board designates as high-impact, meaning they carry significant effects on rights and safety. At the VA, benefits processors use AI to compress an average of 1,300 pages of veterans’ medical evidence down to roughly 10 pages. At the IRS, AI assists with audit selection and fraud detection. In the intelligence community, analysts use it to synthesize intelligence faster than any human team can work.

And in the military — the portion of the inventory that doesn’t appear in the inventory — operators, analysts, and planners built workflows around a single frontier model. They learned its quirks. They built integrations. Their own leadership had to ask various commands to describe their usage levels after the crisis began. The Pentagon didn’t know what the people it employed had built into their daily operations. It found out during a contract dispute in the middle of a war.

Replacing the People You Replaced

The same administration that aggressively accelerated AI adoption across federal agencies spent 2025 removing the people who understood those agencies. By the end of last year, approximately 352,000 federal employees had exited their roles — the largest reduction of the federal workforce in history, according to the Office of Personnel Management. The cuts hit the VA, DHS, HHS, DOD, and IRS: the exact departments that had most deeply integrated AI into their operations. Roughly 10,000 STEM experts left federal jobs in 2025 alone, representing 14 percent of the doctoral-level workforce the government employed going into the year.

Those departures didn’t just reduce headcount. They removed the people who knew which AI outputs to question — who understood which edge cases broke the system, which recommendations required a second look, which patterns of error the tool had developed over months of use. What remained, in many agencies, was the tool and a thinner, less experienced workforce inheriting workflows they didn’t build, running on systems they didn’t design, producing outputs they had diminished capacity to evaluate.

Officials replaced human expertise with AI. Then they removed the humans who knew how to interrogate what the AI was doing.

People shoot people. Guns don’t fire themselves. But handing a weapon to someone untrained, pointed at something critical, and walking away — that is its own kind of decision, and someone made it.

Liberation Day

On April 2, 2025, which the administration called “Liberation Day,” it announced sweeping global tariffs affecting imports from more than 185 countries. Stripped of its notation, the formula the White House released was straightforward: take the U.S. trade deficit with each country, divide it by that country’s total exports to the U.S., then cut the result in half as an act of, in the White House’s framing, presidential kindness.

Within hours, financial journalist James Surowiecki had reverse-engineered it. What the White House described as a sophisticated reciprocal tariff calculation was, in practice, a trade deficit divided by imports. Not a tariff rate. Not a measure of what other countries actually charge. Just a trade imbalance, repackaged as a number and attached to every country on earth.
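The arithmetic is simple enough to write out in a few lines. What follows is a minimal sketch, assuming the plain-language description of the formula is complete. The function name `reciprocal_tariff` and the dollar figures are hypothetical, and the 10 percent floor is an assumption that mirrors the “minimum 10 percent” in the AI prompt discussed in this section, not an official specification.

```python
def reciprocal_tariff(deficit: float, imports: float, floor: float = 0.10) -> float:
    """Hypothetical sketch of the tariff arithmetic as publicly described.

    deficit: U.S. trade deficit with a country (U.S. imports minus U.S. exports).
    imports: that country's total exports to the U.S. (U.S. imports from it).
    floor:   assumed minimum rate of 10 percent (an assumption, see lead-in).
    """
    if imports <= 0:
        raise ValueError("imports must be positive")
    ratio = deficit / imports   # trade imbalance as a share of imports
    halved = ratio / 2          # the "discount" the White House described
    return max(floor, halved)   # never below the assumed minimum


# Hypothetical figures, in billions of dollars
print(round(reciprocal_tariff(deficit=30, imports=100), 4))  # 0.15
print(round(reciprocal_tariff(deficit=10, imports=100), 4))  # 0.1 (floor applies)
print(round(reciprocal_tariff(deficit=-5, imports=100), 4))  # 0.1 (U.S. surplus: floor applies)
```

Note what the function never takes as an input: any tariff or trade barrier the other country actually imposes. The only inputs are bilateral trade flows, which is exactly the gap Surowiecki identified.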

Then people started asking AI the obvious question.

When prompted with a simple request — calculate tariffs to balance trade deficits, minimum 10 percent — ChatGPT, Gemini, Grok, and Claude all produced the same formula. Independently. Identically. Every major AI model converged on the same answer to the same obvious prompt.

Every one of them also warned, in the same output, that the approach was too simplistic. That it failed to account for demand elasticity, currency exchange rates, and supply chain complexity. That it would likely trigger retaliation. That it wasn’t actually a measure of what trading partners charge. The AI said: here is the easy answer you asked for, and here is why you shouldn’t use it.

The White House told the New York Post that the chief architect of the tariffs “remains a mystery.” Commerce and State Department economists who would normally have developed and defended such a methodology were nowhere on the circuit afterward. Nobody made the rounds explaining the research. The formula appeared, apparently out of whole cloth, attached to a policy affecting the entire global economy.

We cannot confirm AI drafted the tariff formula. What we can confirm is that when you ask AI for the easy answer to a complex question, it produces exactly that formula — and then tells you not to use it. The caveats were in the output. Someone either didn’t read them or decided they didn’t matter.

Both possibilities illuminate the same failure.

225 Judges. 700 Cases. One Pattern.

The tariffs were not an isolated event. They were an instance of a pattern visible across the entire body of executive governance these fourteen months have produced.

By late November 2025, at least 225 judges had ruled in more than 700 cases that the administration’s mandatory immigration detention policy was a likely violation of law and due process. Not a contested question on which reasonable jurists might disagree. Two hundred and twenty-five independent judges, in hundreds of separate proceedings, arriving at the same conclusion.

The birthright citizenship order contradicted the 14th Amendment and more than a century of settled Supreme Court precedent. Courts blocked it immediately. The elections order attempted to regulate elections — a power the Constitution assigns to states and Congress, not the executive. Blocked. The AI preemption order attempted to use executive authority to nullify state laws, a power belonging exclusively to Congress. Legal analysts called the Commerce Clause argument meritless — and it emerged that the argument had been taken almost directly from a venture capital firm’s position paper, inserted into an executive order, and signed, without anyone apparently pausing to ask whether it held up.

A venture capital firm’s legal theory. Accepted without interrogation. Submitted as governance.

Outcome first. Justification borrowed. Interrogation never. Reach for whatever sounds plausible. Name it confidently. Move fast.

This is what outsourced thinking looks like at scale: not one mistake, not one overreach, but a systemic pattern of conclusions arrived at before the reasoning — confident outputs with no visible methodology behind them, no subject-matter experts defending the work, no paper trail pointing to who thought through the hard parts and what they found.

The Contradiction That Explains Everything

Here is where the administration’s position on AI becomes worth examining very carefully — because they hold, simultaneously, views that cannot all be true.

They have said AI is so essential to national security that they would blacklist an American company, invoke supply chain risk designations reserved for foreign adversaries, and threaten to use the Defense Production Act rather than accept limits on its use. Claude, they argued, was critical infrastructure. Losing it mid-operation put warfighters at risk.

They have also said, in a July 2025 executive order, that AI is so ideologically corrupted and untrustworthy that federal agencies must stop using models with “woke” bias. That diversity, equity, and inclusion requirements in AI systems pose, in the order’s own language, “an existential threat to reliable AI.” By March 11, 2026, agencies were required to update internal policies, certify their AI vendors comply with “unbiased” principles, and create reporting pathways for employees to flag outputs they deem ideologically contaminated.

In the same month that Grok, the chatbot built by Elon Musk’s xAI, generated antisemitic content, xAI received a Pentagon contract worth up to $200 million, only weeks after Musk had stepped down as the special government employee leading DOGE. The AI safety concern, apparently, was selective.

Elsewhere, officials moved to strip anti-discrimination requirements from AI systems — requirements that exist precisely to catch the kinds of errors that produce bad outputs — on the grounds that preventing bias makes AI less truthful. The argument, stripped to its structure, is that an AI unconstrained by accuracy requirements for protected groups is more accurate than one required to be accurate for protected groups. That is not a position on AI reliability. It is a conclusion dressed in technical language.

So: AI is untrustworthy when it refuses to do what they want. AI is authoritative when it produces what they need to ship. The safeguards are the problem when they create friction. The outputs are reliable when nobody checks them.

This is not a coherent philosophy of artificial intelligence. It is a coherent philosophy of control.

The administration doesn’t distrust AI. They distrust AI that pushes back. They distrust the caveats attached to the tariff formula. They distrust the guardrails Anthropic refused to remove. They distrust the anti-discrimination requirements that might flag a biased output. They distrust, in other words, exactly the features that make AI a tool rather than a mirror — the parts that resist reflecting back whatever you brought to the question.

People who remove interrogation from their tools end up with tools that tell them only what they asked for. That’s not artificial intelligence. That’s an expensive echo.

What This Costs

The people who might have caught these failures — the subject-matter economists who would have flagged the tariff formula, the constitutional lawyers who would have identified the Commerce Clause overreach before it was signed, the experienced federal workforce that knew which AI outputs required a second look — those people were let go. Deliberately. By the same administration that pushed the tools into every corner of federal operations and then demanded those tools operate without friction.

Three hundred and fifty-two thousand federal employees exited in 2025. The institutional knowledge they carried — the accumulated expertise about when the system fails, when the output is wrong, when the confident answer deserves a harder question — left with them.

What remained was the tool. And the habit of taking it at its word.

This combination — aggressive AI adoption, gutted human oversight, active removal of safeguards, and a demonstrated pattern of accepting outputs without interrogation — does not produce efficient governance. It produces governance where nobody is responsible for the reasoning because the reasoning was never actually done. Where the ceiling comes down and the official response is to find someone else to blame for the moisture.

The Question

The Pentagon’s “holy shit” moment was, in its way, a gift. A senior official said the quiet part out loud. He didn’t know what his own people had built. The dependency had grown in the dark, without accounting, without audit, until a contract dispute in the middle of a war forced the reckoning.

That reckoning is still unresolved. And it is not the only one coming.

When the tariff formula shook the global economy and the chief architect “remains a mystery” — that is a reckoning deferred.

When 225 judges look at the same policy and find the same violation, and the response is to keep filing rather than to ask what produced the policy — that is a reckoning deferred.

When an AI model’s output gets stripped of the caveats that said don’t do this and the output becomes law — that is a reckoning deferred, until the real-world consequences make deferral impossible.

The question this moment asks is not whether AI is dangerous. The question is what it costs when the people operating the most powerful tools ever built decide that the only AI they trust is the AI that agrees with them — and then remove, systematically, everyone who might have noticed the difference.

The tool doesn’t decide anything.

Those were not the machine’s decisions.

Sources

Federal AI adoption and inventory

- 2024 Federal Agency AI Use Case Inventory (OMB/GitHub): https://github.com/ombegov/2024-Federal-AI-Use-Case-Inventory

- FedScoop — federal AI use case inventory coverage: https://fedscoop.com/us-government-annual-tally-ai-use-cases-coming-soon/

- DHS AI Use Case Inventory: https://www.dhs.gov/ai/use-case-inventory

- FedTech Magazine — federal AI adoption overview: https://fedtechmagazine.com/article/2025/12/tech-trends-2026-gsas-usai-platform-helps-agencies-act-ai-action-plan-perfcon

Pentagon / Anthropic / military AI dependency

- Fortune — Pentagon “whoa moment” / Emil Michael: https://fortune.com/2026/03/07/pentagon-emil-michael-anthropic-claude-defense-ai-openai-iran-war-palantir/

- Defense One — replacing Anthropic would take months: https://www.defenseone.com/threats/2026/02/it-would-take-pentagon-months-replace-anthropics-ai-tools-sources/411741/

- CNBC — Anthropic designated supply chain risk: https://www.cnbc.com/2026/03/05/anthropic-pentagon-ai-claude-iran.html

- CNBC — defense tech companies dropping Claude: https://www.cnbc.com/2026/03/04/pentagon-blacklist-anthropic-defense-tech-claude.html

- Anthropic statement on Department of War: https://www.anthropic.com/news/statement-department-of-war

Federal workforce reductions

- CNBC — federal workers find new roles after DOGE cuts: https://www.cnbc.com/2026/02/12/after-doge-cuts-federal-workers-new-roles.html

- AllSides — DOGE effect tracker, STEM departures: https://www.allsides.com/blog/doge-effect-tracking-trumps-cuts-federal-agencies-and-funding

- Wikipedia — 2025 United States federal mass layoffs: https://en.wikipedia.org/wiki/2025_United_States_federal_mass_layoffs

Tariffs and AI

- Newsweek — Trump tariffs and ChatGPT formula: https://www.newsweek.com/donald-trump-tariffs-chatgpt-2055203

- TechRepublic — AI models and tariff calculations: https://www.techrepublic.com/article/news-trump-tariffs-generative-ai/

- Jamie Bartlett / Substack — did AI write the tariffs: https://jamiejbartlett.substack.com/p/did-an-ai-really-write-trumps-tariffs

Executive orders and legal challenges

- Just Security litigation tracker — 225 judges/700 cases: https://www.justsecurity.org/107087/tracker-litigation-legal-challenges-trump-administration/

- Center for American Progress — AI preemption order legally flawed: https://www.americanprogress.org/article/trumps-executive-order-on-ai-is-legally-and-constitutionally-flawed/

Administration’s AI bias / “woke AI” executive orders

- White House — “Preventing Woke AI in the Federal Government” (July 2025): https://www.whitehouse.gov/presidential-actions/2025/07/preventing-woke-ai-in-the-federal-government/

- NPR — Trump AI executive order and culture war: https://www.npr.org/2025/07/23/nx-s1-5476771/trump-artificial-intelligence-woke-eo

- Nextgov — OMB memo on “biased” AI: https://www.nextgov.com/artificial-intelligence/2025/12/white-house-instructs-agencies-stop-using-biased-ai/410135/